Are your DNS servers still architected like it’s 1999?

DNS hasn’t changed much, but networks have. Your traditional DNS server architecture might be due for some re-thinking to a cascading approach.

Key Takeaways

While technology has drastically evolved in the last 33 years, many DNS server architectures today follow the same networking rulesets and ideas that were cutting edge in 1987. Until about 1999, all of those rules were more or less unchanged when DNS extension mechanisms were introduced.

Is your DNS still architected like it’s 1999?

Even if it is, why change what you’ve always been doing? It’s not wrong—it’s obviously working. But the internet of 1999 is about as relevant today as its breakout star of that year, Napster.

A lot has evolved since then.

This post will explore the traditional primary and secondary DNS server architecture. And it will delve into why it might be due for a redesign to a cascading approach that better reflects today’s networking capabilities.

A small group of IT professionals who are part of our open DDI and DNS expert conversations recently discussed these ideas. All are welcome to join Network VIP on Slack.

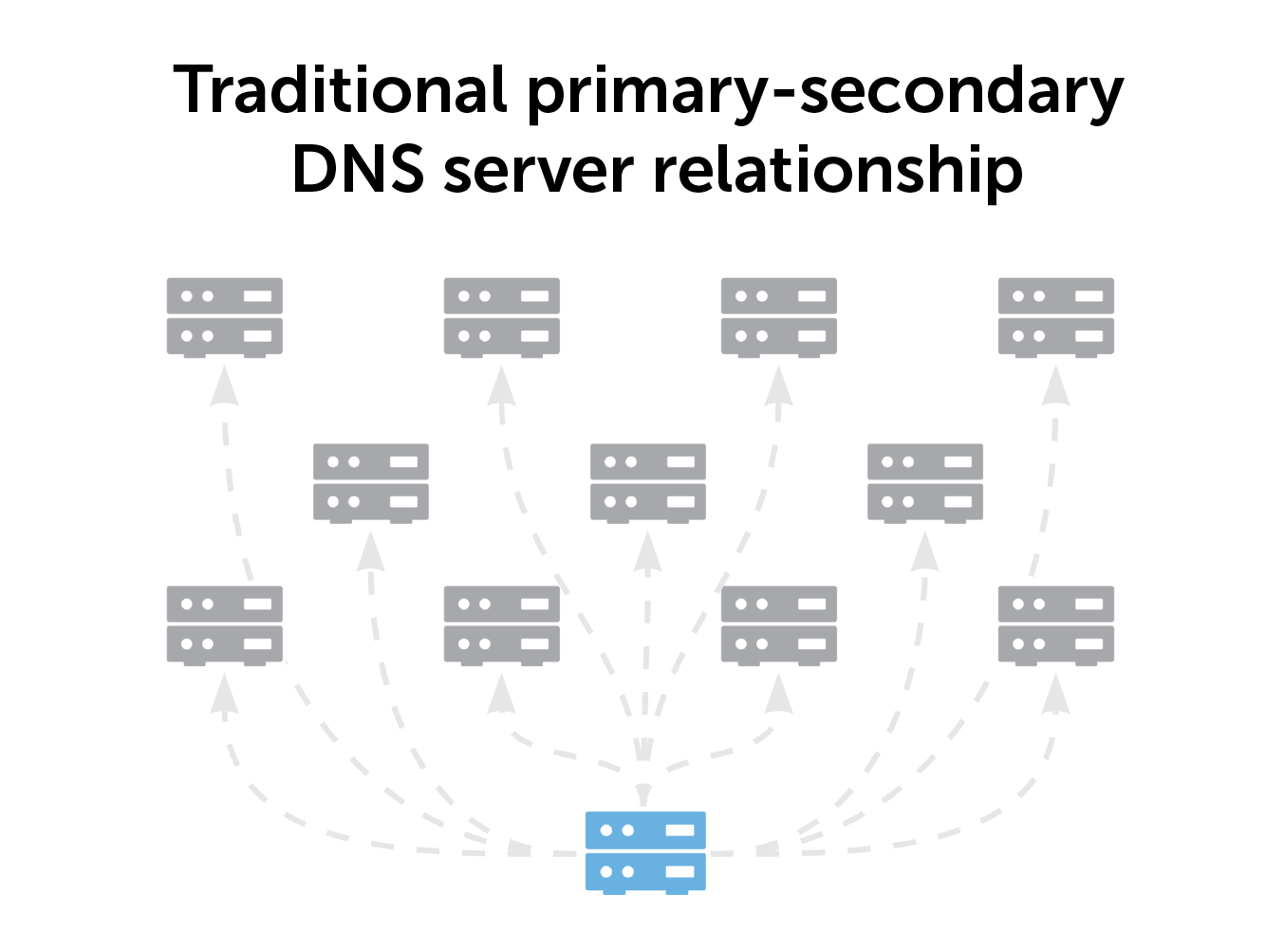

Primary-secondary DNS server relationships

In a traditional DNS server setup, one primary DNS server feeds all the secondary servers in your environment. It’s an architecture that has worked for a very long time, especially for any small environment. However, there are drawbacks.

If the primary server faults, everything is lost. There are copies of DNS records in the secondary servers. But bringing the primary server up again requires manual intervention.

Furthermore, there are capacity limitations. Connecting the primary server to more secondary servers requires more computing power. BlueCat modeling shows that connecting more than 20 secondary servers to your primary server degrades performance. BlueCat doesn’t recommend any more than eight secondaries.

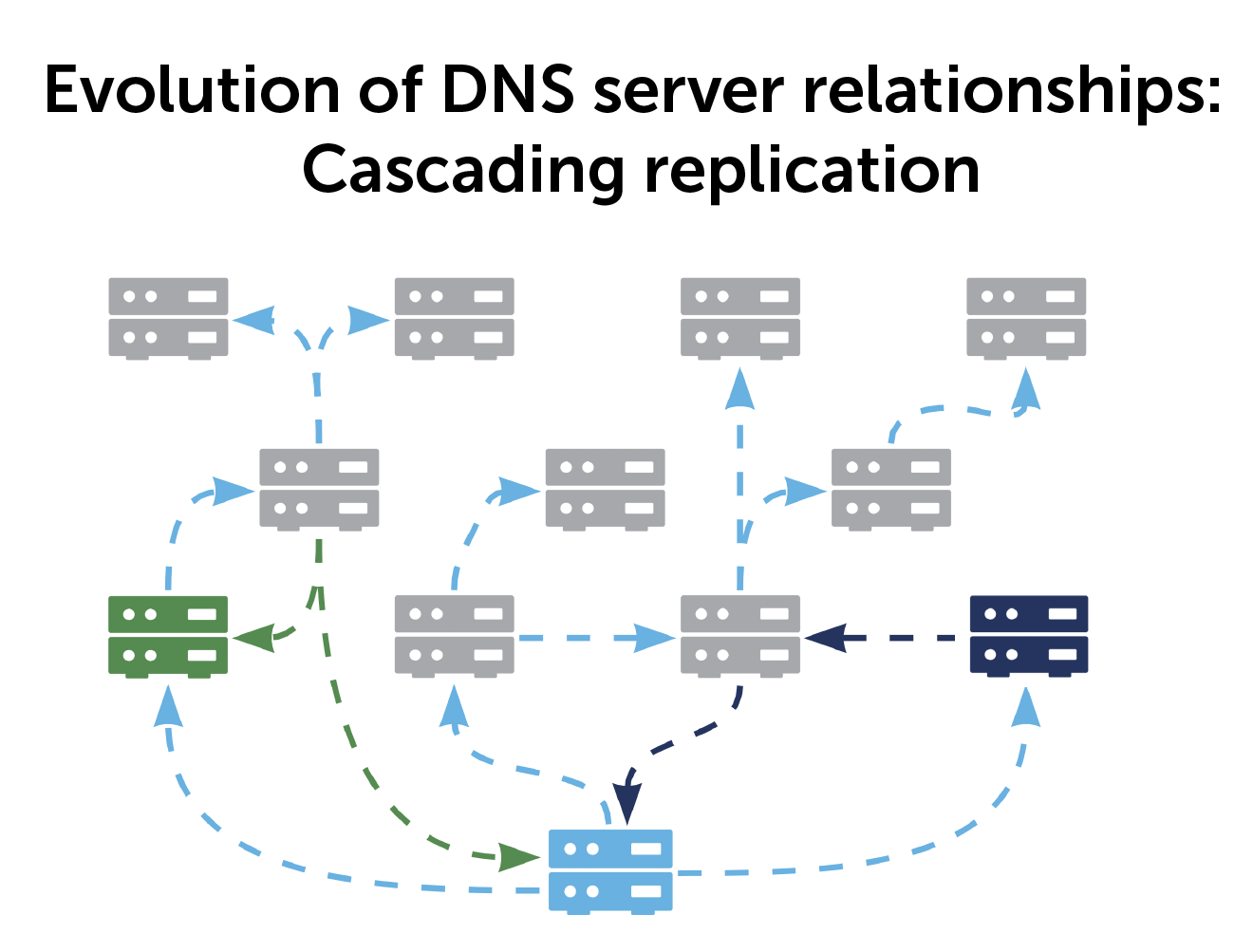

Cascading replication as a DNS server alternative

With a cascading architecture, everything replicates to every server. You can have a spider web or tree branch type of cascade with multiple paths and redundancies.

Imagine a network with 65 DNS servers. You want to replicate them, and the primary server can do 20 servers in parallel. If one synchronization takes 10 seconds, the first 20 servers get updated in 10 seconds. The second 20 get updated in another 10 seconds, then another 10 seconds for the third, and finally the last five. In total, that’s 35 seconds.

Now, take a cascading replication for those 65 servers. A primary has a maximum of eight secondaries (or leaves). The primary updates eight servers in 10 seconds, but then each of those eight can replicate eight of its own leaves in 10 seconds. Replicating finishes in 20 seconds.

While there’s not much of a difference between 20 and 35 seconds, imagine if you had hundreds of servers. The timing differences become significant.

The benefits of a cascading model

With a cascading model, it’s also easier to architect your network into zones or regions. No one central server is responsible for the whole world. Updates do not have to go back to the central server and then propagate out from it.

Think of it instead as one primary server per zone. It could be the same primary for multiple zones, sure. But the idea is to split out various zones to various servers across multiple data centers.

For example, let’s say you have servers in Germany, Japan, and the U.S. And someone registers a web server in Japan. Does Germany and the U.S. need to know about that registration immediately? Probably not. That server is primarily important for the local Japan zone. So, make your updates there first and then propagate from there throughout the globe.

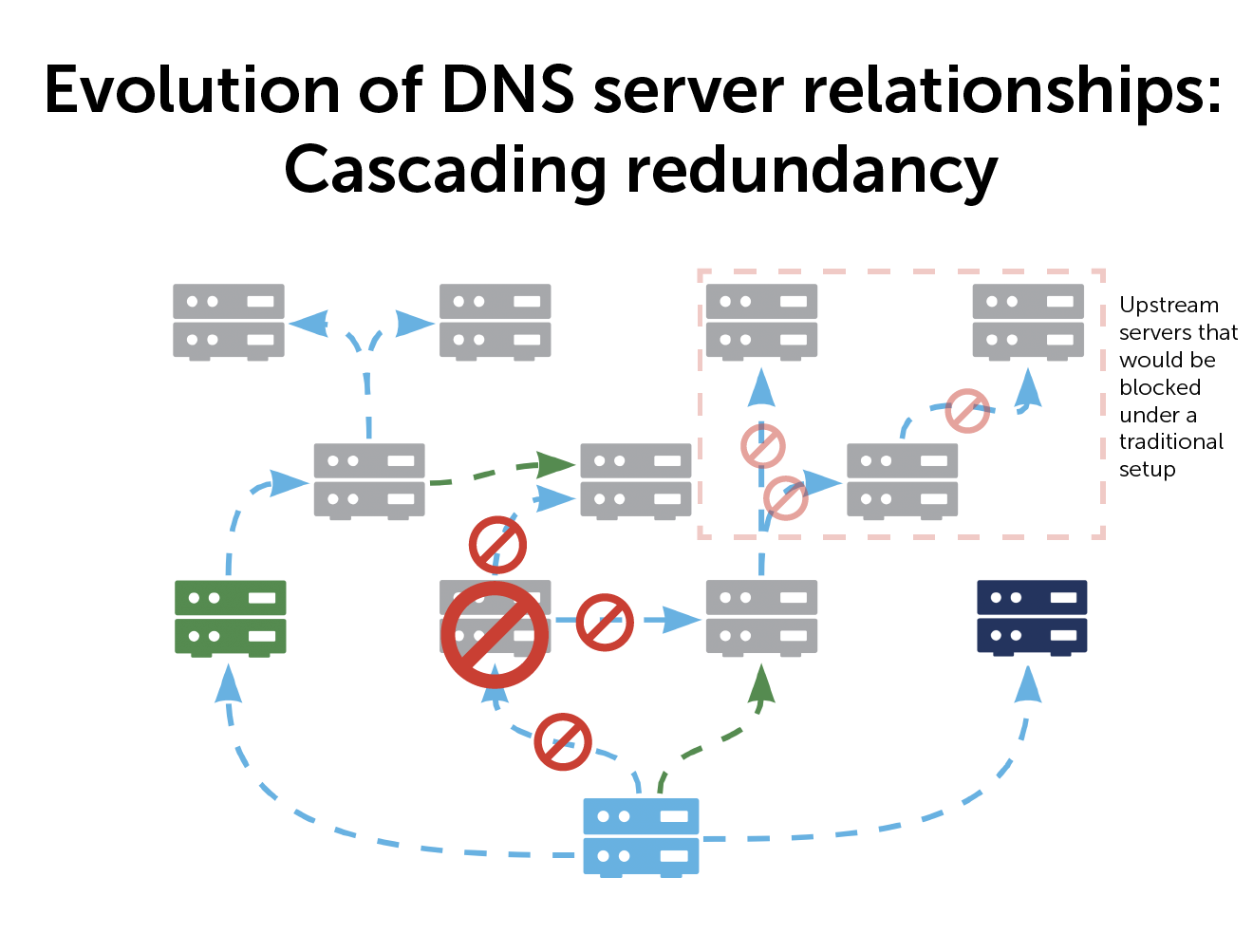

And another thing: Self-healing

Self-healing architecture in DNS is not common, but a cascading architecture makes it possible.

If a server goes down, you have architected and pre-defined the secondary options of communication. As a result, the server needs no human intervention. The moment a server goes down, the server will automatically switch over and the system self heals. It’s zero-touch auto heal.

Why do it this way?

The primary benefit of a cascading model is better redundancies.

In case of a server failure, you bypass it by specifying multiple upstream servers. You can tell any server, ‘this is your primary, if you can’t reach it, try another one.’ You can create secondary, tertiary, and quaternary paths—up to 256 different upstream abilities. As long as one server in your path is working, you can still keep your system up to date.

If your primary server goes down it is just for that zone, minimizing the impact on your global network. And you can move that zone to any other primary server to keep things humming. Every one of your clients and servers is still getting all the information, just via a different path.

All of this is to not say that a cascading architecture is right for everyone. If your network is small, a traditional primary-secondary architecture may be exactly what you need. The larger your enterprise, the more benefits you will reap from a cascading model.

However, if your DNS server architecture might need some updating, learn how the BlueCat platform can help.