IT pros debate: Should you DIY your DDI?

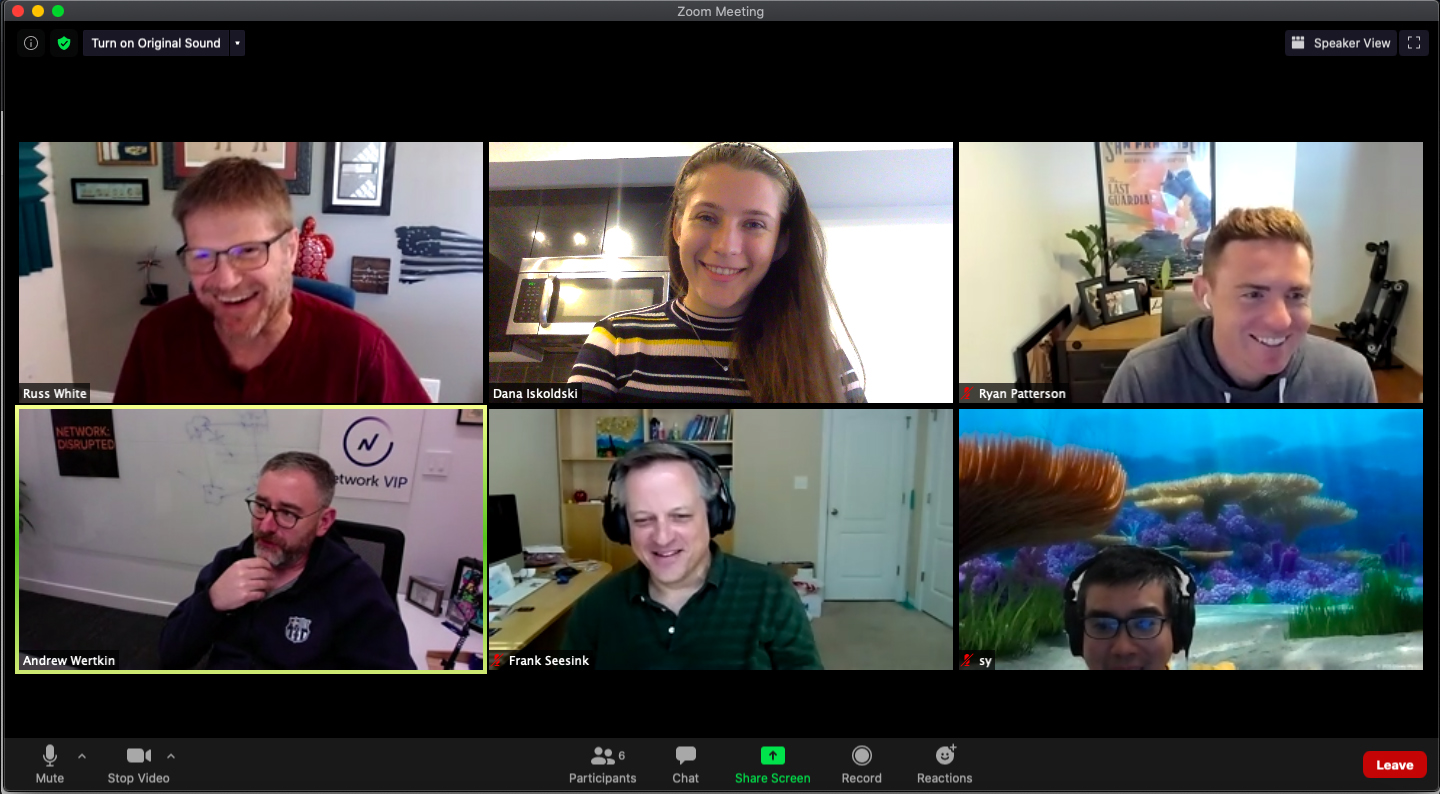

Five IT pros get real about DIY vs. enterprise DNS solutions during the second Critical Conversation on Critical Infrastructure hosted in Network VIP.

Critical Conversations on Critical Infrastructure Ep. 2: “Should you DIY your DDI?”

The question poses a bit of a false dichotomy, as if the right answer were either yes or no. In reality, the truth is somewhere in the middle. Whether your organization ought to DIY its DNS, DHCP, and IP address management (together known as DDI) depends on many factors. (In this case, ‘DIY’ means skipping an enterprise-grade solution in favor of a homegrown system that pulls together BIND, Active Directory, and/or other tools.)

A diverse group of pros from across IT were invited to discuss both philosophical and practical answers to the question in an open forum.

Moderator: Andrew Wertkin, Chief Strategy Officer, BlueCat and host of Network Disrupted podcast

Panelist: Russ White, Infrastructure Architect, Juniper Networks

Panelist: Sy Na, Network Engineer, Robinhood

Panelist: Frank Seesink, Senior Network Engineer, higher education industry

Panelist: Ryan Patterson, System Engineer, Uber

Below is a list of the most important, helpful messages to come out of BlueCat’s second installment of Critical Conversations on Critical Infrastructure. To continue the conversation, follow up with panelists, and see what others have said about the roundtable, join Network VIP—our Slack community where pros in IT connect and share their expertise on all things networking.

Lesson #1: Respect the network’s humble beginnings.

“Having been in the industry long enough, I see it more as a continuum than a company deciding to do one thing or the other right from the start,” Sy Na reminded us.

“Most people, when they start from a start-up (like 10 or 20 people), there’s going to be an AirPort Express, Linksys, or whatever they have running at home [for] running DHCP, DNS.

“Most of the time, the founders of the company rarely think about this. They just do whatever, and then it’s not until they get to the point where we need to hire someone to manage this that any thought is put into any of this.”

Lesson #2: Know when you need to start the strategic DDI conversation.

Ryan Patterson put it fairly bluntly: “When people start talking about DDI is when you need to start thinking about DDI.”

To be more specific, Russ White added, “In a data center, [haphazard DDI] becomes a thing when it impacts performance of applications. In the rest of the world, it becomes a thing when it doesn’t work anymore and somebody’s got to go fix it, and it has to be fixed enough that it’s a problem.”

Essentially, there’s an obvious and often painful tipping point that necessitates asking questions. Should we be more strategic about DDI? How should we approach it?

The value of that conversation is clear. Frank Seesink explained, “I am working, from my perspective, in an organization that actually has a DDI architect. That’s his title. That’s his role, which shows you the emphasis they put on it. They value that, and that matters to me. That’s why if you look at my career, I don’t have a lot of job hops. But when I go, I go to places that are smart enough to realize true value. That’s not an easy calculation all the time.”

Lesson #3: Network complexity goes by many aliases.

If you were to zoom out and look at the issues that plague a network or organization, you could boil them down to one word: complexity. However, from the vantage point of individuals or even functional teams, it often gets misdiagnosed as something else.

These are just some of complexity’s aliases:

- Silos. Many teams within networking don’t collaborate or communicate as well as they ought to. “The absolute truth we forget,” said White, “is that the networking guys really just barely understand DNS, and the DNS guys just barely understand the network.”

- Limited deep expertise. Andrew Wertkin explained, “There are few experts, and there’s a lot of people with their hands in the pot.” Seesink said of a previous job, “We had two people, just two people, who understood that system.”

- Number and diversity of sites. “Complexity is not always correlated to the number of people,” explained White, “but it is a rough measure.” Na added, “About 500 to 1,000 people is usually when that /24 just doesn’t cut it anymore.” Wertkin added, “A lot of people think about it in terms of number of devices as well.”

- Manual work. Seesink recalled traumatic memories of unscalable, error-prone processes from a past job. “We were managing IP addresses in a spreadsheet that I referred to as a spreadsheet from hell. That lasted way longer than it should have. It’s also an unscalable solution from the IP address management side, because if you’re using a spreadsheet, even if you put it on network share, only one person can edit it at a time. It’s a nightmare. You add in DNS to it—how are we going to manage this?”

- Tension between a need for change and stability. “There’s the critical infrastructure paradox,” explained Wertkin. “It needs to be up. And there’s an assumption that the best way to keep something reliable is to keep it somewhat static. Yet, the stuff needs to change all the time now. [We often hear,] ‘this stuff needs to change rapidly, yet I don’t want it to break.’”

There are more: legacy infrastructure, variability between technology stacks across an organization, and the list goes on.

Simplicity is hard, right?
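To put a number on Na's remark that a /24 "just doesn't cut it" once an organization reaches 500 to 1,000 people, here is a minimal sketch using Python's standard `ipaddress` module. The function names are hypothetical, and the one-address-per-person figure is a simplifying assumption for illustration; real networks usually need more than one address per person.

```python
import ipaddress


def usable_hosts(cidr: str) -> int:
    """Return the number of assignable host addresses in an ordinary IPv4 subnet."""
    network = ipaddress.ip_network(cidr)
    # Subtract the network and broadcast addresses (not valid for /31 or /32).
    return network.num_addresses - 2


def smallest_prefix_for(devices: int) -> int:
    """Return the longest IPv4 prefix length whose subnet still fits `devices` hosts."""
    for prefix in range(30, 0, -1):
        if 2 ** (32 - prefix) - 2 >= devices:
            return prefix
    raise ValueError("too many devices for a single IPv4 subnet")


# Hypothetical example: one address per person.
print(usable_hosts("10.0.0.0/24"))   # 254
print(smallest_prefix_for(500))      # 23
print(smallest_prefix_for(1000))     # 22
```

A single /24 tops out at 254 hosts, so even under this generous assumption, an organization of 500 people has already outgrown it.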

Lesson #4: To tame complexity, take your blinders off.

You can start by understanding how the entire system works.

White pointed out, “We [in IT] don’t take a systemic view of these things. We take a piece-by-piece view… I think that’s a really major problem for us.”

Wertkin added, “It’s tough for people to do that, because a lot of people just have their blinders on.”

That’s why, during his company’s recent migration, Patterson said, “We sat down with all those teams around us that utilize the [DDI] service and asked them what their ‘plus one’ was. So that we made sure to accommodate their growth for a long period of time, but also made it as simple as possible. That’s the end goal, right? You don’t want to sit here and have this complex web.

“I recommend everybody just taking a look at your infrastructure, just from where the DHCP packet comes in, and then just go step by step in understanding and seeing where you could make those simplifications.

“Now, with our current design, one of the cool things that we did was any office can touch any DHCP server in our infrastructure. So, we can encounter DNS outages across multiple data centers and still be able to get on IPAM, move those DHCP servers somewhere else, and we can have one region hosting an entire different region at this point. Just by looking at each little component and understanding the service itself, you’re providing a service to everybody else, because they will never lose DHCP. They will never lose DNS as long as that office has an internet connection.”

[Editor’s note: Patterson works for Uber, which recently completed the process of migrating to BlueCat from a homegrown solution.]

Lesson #5: Tradeoffs rarely hide in plain sight.

“If you haven’t found the tradeoffs, you haven’t looked hard enough,” said White.

He added, “For me, for DDI, a lot of it’s going to come down to, what’s your telemetry look like? What’s your performance look like internally versus externally? Do you want to push everything to a cloud-based service? Do you want to run a commercial service versus running BIND or something that you’ve rolled yourself?”

Seesink also reminded us, “Cost is a very amorphous term. For some people, cost is money. For other places, it’s manpower. Do you have the skillset?” At his last workplace, he recounted, “Even in an organization that small, in the end, it was far better to not do the DIY because it gave us more flexibility. Also, it offloaded certain workloads. In the long run, it saved a lot more money. But it was hidden money.

“We suddenly had almost 10 people that could easily go in and make changes as needed. The really nice part is you have accountability, because you have audit trails for every change that’s made. If somebody complains that their website disappeared, you could find who made that change. Which, in most of those systems, like BIND, good luck.

“For a lot of folks, do you build your own car? No. We go buy a car. Why? Because Honda makes thousands of them a day. There’s a boundary point to it, but sometimes it’s getting that box and paying that upfront cost. Like I said, there is the hidden cost behind it, but there’s also that expertise that’s going into it.”
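The audit trail Seesink describes can be illustrated with a small sketch. This is not how any particular DDI product works; `AuditedZone`, `Change`, and the record values are hypothetical names and data invented for illustration. The idea is only that every change to a record is logged with who made it and when, so a disappearing website can be traced.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Change:
    """One immutable audit entry (hypothetical structure)."""
    when: datetime
    who: str
    name: str
    old: Optional[str]
    new: Optional[str]


@dataclass
class AuditedZone:
    """A toy record store that logs every change it accepts."""
    records: Dict[str, str] = field(default_factory=dict)
    log: List[Change] = field(default_factory=list)

    def set_record(self, who: str, name: str, value: Optional[str]) -> None:
        """Create, update, or (with value=None) delete a record, logging the author."""
        old = self.records.get(name)
        if value is None:
            self.records.pop(name, None)
        else:
            self.records[name] = value
        self.log.append(Change(datetime.now(timezone.utc), who, name, old, value))

    def last_change(self, name: str) -> Optional[Change]:
        """Return the most recent audit entry for a record, if any."""
        for change in reversed(self.log):
            if change.name == name:
                return change
        return None


zone = AuditedZone()
zone.set_record("alice", "www.example.com", "192.0.2.10")
zone.set_record("bob", "www.example.com", None)  # the website "disappears"
culprit = zone.last_change("www.example.com")
print(culprit.who)  # bob
```

With hand-edited zone files, answering the same question typically depends on whatever version control or change process the team has layered on top.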

“The argument that I don’t want to make is that you couldn’t do this stuff with DIY,” Wertkin clarified. “Because, of course you can. I think the question just comes into how much of that cost you’re absorbing versus utilizing a vendor to absorb it.”

Patterson added his two cents. “One of the reasons why we ended up making the transition to BlueCat [was] because the time and effort we would have to put in to create our own DDI solution just to meet the features that BlueCat provided well out-cost the time in doing it ourselves.”

That’s all, folks. This was the second installment in the Critical Conversations on Critical Infrastructure series, and it was rich with helpful insight. Coming up on December 8, the Network VIP community will be exploring the question, “Who should own DNS in the cloud?” Tune in and participate in the thoughtful discussion on Slack.